INFLXD.

Financial Transcription Services: What They Are, Who Needs Them, and Why Accuracy Matters

Financial transcription services turn market-sensitive audio into reliable data. Learn who needs them, why accuracy matters, and what to look for in a vendor.

Beatrice Eyales

Apr 29, 2026

LSEG produces approximately 40,000 financial event transcripts per year. Kensho’s transcription models were trained on over 100,000 hours of professionally curated financial audio. S&P Global holds its transcripts to a 99% accuracy SLA.

These aren’t transcription vendors. They’re data infrastructure companies that happen to produce transcripts.

Now consider the gap between how those organizations treat transcription and how most procurement teams buy it. You’ve got three vendor proposals on your desk. One promises the lowest per-minute rate. Another touts “AI-powered speed.” A third leads with compliance certifications. None of them tell you what happens when their system renders “EBITDA margin of 14.2%” as “a bit of margin of 40.2%,” or tags the CFO’s remarks to the wrong speaker, or drops a critical qualifier from a forward-looking statement on an earnings call. And none of them explain how those errors compound once the transcript feeds your search platform, your AI summarization pipeline, or a client’s investment thesis.

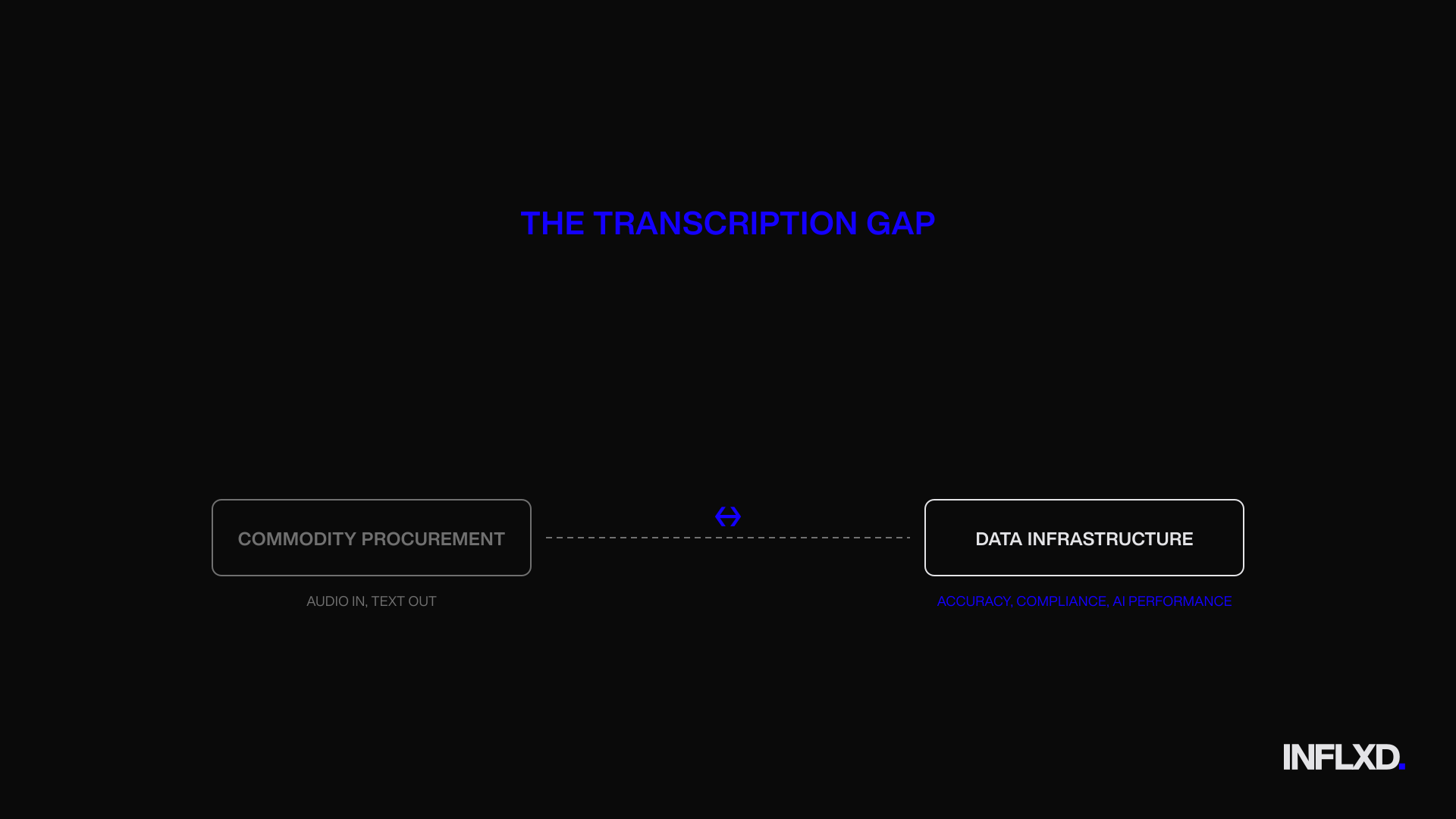

That’s the core problem. Most organizations still procure financial transcription services as if they’re buying a commodity: audio in, text out, lowest cost wins. But financial transcription isn’t an administrative task. It’s the process of converting market-sensitive spoken content into searchable, reusable, and auditable financial data. The organizations that treat it as such gain a structural advantage in accuracy, compliance readiness, and downstream AI performance. Those that treat it as commodity text generation inherit compounding risk they can’t see until it surfaces in a compliance review, a flawed model output, or a client-facing error that erodes trust.

This piece breaks down what financial transcription actually involves (and how it differs from general transcription), who depends on it across the financial ecosystem, why financial audio creates uniquely demanding accuracy requirements, where AI-only approaches fall short, and what separates a production-grade vendor from a commodity one.

What Financial Transcription Services Actually Are (and How They Differ from General Transcription)

The term “transcription” covers a lot of ground. A podcast interview, a medical dictation, a legal deposition, and an earnings call all require audio-to-text conversion. But the similarity ends there. Financial transcription is a specialized discipline: the conversion of market-sensitive spoken content into accurate, structured, and reusable text records that function as data infrastructure, not just documents.

The source material includes earnings calls, expert network interviews, investor briefings, M&A diligence calls, analyst days, and conference presentations. Every one of these carries domain-specific demands that generic transcription workflows aren’t built to handle. And when the output feeds downstream systems (search platforms, AI summarization tools, compliance archives, client-facing research products), the quality bar isn’t “good enough to read.” It’s “accurate enough to act on.”

Defining Financial Transcription: From Audio to Structured Data

General transcription services optimize for two things: speed and cost on conversational English. That’s a reasonable model for meeting notes, marketing interviews, or podcast episodes. It’s NOT a reasonable model for content where a misheard number can misrepresent a company’s guidance, or where a dropped qualifier changes the meaning of a forward-looking statement.

Financial transcription requires a fundamentally different set of capabilities. Numerical precision is the most obvious one. Basis points, revenue figures, guidance ranges, margin percentages, share counts. These aren’t decorative details. They’re the substance of the communication. When a CFO says “free cash flow conversion of 92%,” the transcript needs to say exactly that. Not “93%.” Not “free cash flow of 92%.”

Then there’s entity accuracy. Financial audio is dense with proper nouns that generic language models routinely mishandle: company names, ticker symbols, product names, drug names in healthcare-focused calls, executive names with unusual spellings. A general transcription vendor processing thousands of hours across dozens of industries simply doesn’t maintain the entity libraries required for this content.

Domain terminology adds another layer. Terms like non-GAAP adjusted EBITDA, organic growth rates, and comparable store sales aren’t in the vocabulary of general-purpose ASR models. They’re not even consistently handled by consumer-grade AI transcription tools that perform well on everyday speech.

Why General Transcription Vendors Fall Short on Financial Audio

The gap isn’t about effort or intent. It’s structural. Most transcription vendors serve broad markets. Their models, their QA processes, and their workforce training are optimized for the median use case, not the financial one. That’s a perfectly rational business decision for those vendors. But it means the output they deliver to financial clients consistently falls short on the dimensions that matter most.

LSEG’s research offers a telling data point here. The organization produces approximately 40,000 financial event transcripts per year and ultimately replaced an external transcription vendor with an in-house ASR pipeline. Why? Because generic transcription couldn’t meet their quality bar at that scale. The decision to build internally (with all the cost and complexity that entails) reflects a conclusion that the vendor ecosystem wasn’t delivering production-grade financial transcription. When one of the world’s largest financial data providers decides it’s better to build than buy, that tells you something about the state of commodity transcription services applied to financial content.

The Core Components of Production-Grade Financial Transcripts

So what does production-grade actually look like? It’s a specific set of outputs that separate financial transcription from commodity text:

Verbatim accuracy at 99%+ on domain content. Not 99% on conversational filler. On the terms, numbers, and entities that carry meaning.

Speaker identification with names, titles, and affiliations. “Speaker 1” and “Speaker 2” labels are useless in a multi-analyst Q&A. The transcript needs to attribute remarks to the right person with the right role at the right firm.

Timestamps at every speaker turn. This enables precise navigation, clip extraction, and audit trails.

Structured formatting. Table of contents, section headers, keyword metadata. The transcript should function as a navigable data object, not a wall of text.

Clean verbatim style. Filler words and false starts are removed, but meaning is never altered. This is a careful editorial judgment, not a find-and-replace operation.

These components aren’t optional extras. They’re what make a transcript usable as financial data rather than just a rough record of what was said. And they’re precisely the capabilities that generic transcription vendors, built for speed and volume across all industries, aren’t structured to deliver consistently on financial audio.
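As a rough illustration of what "a navigable data object" could mean in practice, the components listed above can be sketched as a small data model. This is a minimal sketch, not any vendor's actual schema: the class names, field names, and sample values (Example Corp, Jane Doe, John Roe) are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Speaker:
    name: str
    title: str
    affiliation: str


@dataclass
class Turn:
    speaker: Speaker
    start_seconds: float  # timestamp at the start of the speaker turn
    text: str             # clean verbatim text for this turn


@dataclass
class Transcript:
    event_title: str
    sections: List[str] = field(default_factory=list)  # section headers / table of contents
    keywords: List[str] = field(default_factory=list)  # keyword metadata
    turns: List[Turn] = field(default_factory=list)

    def turns_by(self, name: str) -> List[Turn]:
        """Return every turn attributed to the named speaker."""
        return [t for t in self.turns if t.speaker.name == name]


# Hypothetical sample data, for illustration only.
cfo = Speaker("Jane Doe", "Chief Financial Officer", "Example Corp")
analyst = Speaker("John Roe", "Analyst", "Example Securities")

transcript = Transcript(
    event_title="Example Corp Q1 Earnings Call",
    sections=["Prepared Remarks", "Q&A"],
    keywords=["free cash flow", "guidance"],
    turns=[
        Turn(cfo, 312.0, "Free cash flow conversion was 92%."),
        Turn(analyst, 1840.5, "Can you walk through the margin guidance?"),
    ],
)

cfo_turns = transcript.turns_by("Jane Doe")
```

With named speakers and per-turn timestamps stored as fields rather than embedded in free text, questions like "what did the CFO say, and when?" become simple lookups instead of manual reading.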

The sections that follow explore who depends on this level of quality, why financial audio creates uniquely difficult transcription challenges, and what happens when the output falls short.

Who Needs Financial Transcription Services: Use Cases Across the Industry

Financial transcription isn’t a niche requirement for a single type of organization. It’s a foundational capability for an entire ecosystem of firms that create, distribute, and act on spoken financial intelligence. The use cases vary, but the common thread is clear: every one of these organizations depends on transcript accuracy to protect their product, their clients, and their reputation. And in most cases, the transcription vendor ecosystem hasn’t kept pace with what these firms actually need.

Expert Networks and Financial Data Platforms

Expert networks like GLG, AlphaSights, Third Bridge, and Guidepoint facilitate thousands of calls per month between institutional investors and domain experts. Each call generates raw intelligence that flows through a transcription pipeline before reaching an analyst’s desk. The resulting transcripts aren’t just records of conversations. They’re client deliverables, searchable research assets, and inputs to transcript libraries that compound in value over time.

The challenge is that expert network calls are among the hardest audio to transcribe well. Speakers span every industry vertical, carry diverse accents, and use terminology that shifts from biotech to semiconductors to energy infrastructure within a single week’s call volume. The transcription vendor ecosystem has historically underserved these firms with commodity solutions that weren’t built for this diversity. Generic ASR models trained on earnings calls or broadcast English don’t have the domain breadth to handle a former supply chain VP discussing rare earth mineral sourcing, followed by a clinical trial investigator walking through Phase III endpoints.

Financial data platforms face a related but distinct problem. Organizations like Bloomberg, LSEG, S&P Global, Tegus, and AlphaSense treat transcripts as structured data products, not documents. Their transcripts feed search indexes, NLP models, sentiment analysis engines, and entity-tagged databases. When a transcript contains an error, it doesn’t just create a bad reading experience. It corrupts downstream data.

The investments these firms make tell the story. Kensho built its Scribe transcription system on over 100,000 hours of professionally curated financial audio. S&P Global holds its transcripts to a 99% accuracy SLA. LSEG produces roughly 40,000 financial event transcripts per year and ultimately moved transcription in-house because external vendors couldn’t meet their quality requirements at scale. These decisions reflect a shared conclusion: transcript quality is product quality, and the available vendor options weren’t built for the complexity of financial audio.

Investor Relations Teams and Earnings Call Transcription

Investor relations teams sit at the intersection of corporate communications and regulatory obligation. Earnings calls are their highest-stakes communication event, and the transcript of that call becomes a permanent, searchable record that analysts, investors, journalists, and regulators can reference indefinitely.

The regulatory context matters here, but it’s important to be precise about what’s actually required. Regulation FD (Fair Disclosure), adopted by the SEC in 2000, provides that when an issuer discloses material nonpublic information to securities market professionals or shareholders, it must make public disclosure of that information simultaneously (if intentional) or promptly (if non-intentional). The SEC recommends that companies post a replay or transcript of earnings calls on their website for a reasonable period to support broad, non-exclusionary distribution. This is a best practice recommendation, not a mandate. But in practice, it means most public companies treat earnings call transcripts as compliance-adjacent documents.

The accuracy stakes are straightforward. A transcript that misquotes guidance figures, drops a material qualifier from a forward-looking statement, or misattributes a remark to the wrong executive creates selective disclosure risk. IR teams need transcripts with precise speaker labels, correct numerical rendering, and faithful reproduction of carefully worded language. Earnings call transcription accuracy isn’t a nice-to-have for these teams. It’s a core part of their fair disclosure posture.

Market Research Firms, Financial Publishers, and Compliance Teams

Beyond expert networks, data platforms, and IR departments, several other categories of financial organizations depend on high-quality transcription for distinct reasons.

Market research firms need searchable, quotable transcripts that analysts can cite in published reports. A misquoted figure or garbled company name in a research product damages credibility with the institutional investors who pay for it.

Financial publishers and news organizations need fast, accurate records of earnings calls and financial events to support real-time coverage. When a transcript misrenders a CEO’s comment about margin compression, that error can propagate into headlines and trading decisions before anyone catches it.

Compliance teams need auditable records with reliable speaker attribution for MNPI monitoring and regulatory review. If a compliance officer can’t trust that the transcript accurately reflects who said what and when, the entire audit trail breaks down.

Each of these use cases reinforces the same structural point. These are sophisticated organizations with high standards and clear requirements. The gap isn’t in their understanding of what they need. It’s in a vendor ecosystem that treats financial transcription as a commodity, applying the same models, the same QA processes, and the same workforce to financial audio that they use for every other industry. That mismatch is where quality breaks down.

Why Financial Audio Creates Higher Transcription Accuracy Demands

Financial audio isn’t just harder to transcribe than general conversation. It’s a categorically different problem. The error modes are different, the vocabulary is different, and the consequences of failure are orders of magnitude higher. A misheard word in a podcast episode is an annoyance. A misheard number in an earnings call is a material data error that can corrupt an investment model, mislead an analyst, or trigger a compliance review.

This distinction matters because most transcription vendors don’t build for it. Their ASR models, their training data, and their QA workflows are optimized for conversational English, not for the specific demands of financial audio. The result is a systematic accuracy gap on exactly the dimensions that financial transcription buyers care about most: numbers, speaker identity, and domain terminology.

Numerical Precision and Financial Terminology Errors

Numbers are the substance of financial communication. Revenue figures, margin percentages, guidance ranges, basis point movements, share counts, growth rates. When a CFO provides updated guidance, every digit matters. The difference between “15 basis points” and “50 basis points” isn’t a transcription typo. It’s a fundamentally different financial signal that changes how an analyst models the quarter.

Generic ASR systems struggle with numerical precision in financial contexts because the audio patterns for numbers are often acoustically similar. “Fifteen” and “fifty” sound alike at normal speaking speed, especially over a phone line. “Billion” and “million” can blur in compressed audio. And percentage figures spoken quickly (“point three percent” versus “three percent”) create opportunities for errors that a general-purpose model has no financial context to catch.

Inflxd’s benchmarking analysis of vendor transcripts surfaced exactly these patterns. In one sample, a vendor transcript rendered “29% market share” as “9% market share.” In another, “0.3 percentage points” became “3 percentage points,” a tenfold distortion. These aren’t edge cases. They’re the predictable output of models that lack financial domain training. The system doesn’t know that a 20-percentage-point swing in market share within a single sentence is implausible. It simply transcribes the phonemes it hears with no financial reasoning layer to flag the result.
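One simple form a "flag the result" layer could take is a pass that marks acoustically confusable number words for human review. The sketch below is an assumption-laden illustration, not a description of any vendor's pipeline: the `CONFUSABLE` table and the `flag_for_review` function are hypothetical, and it assumes the ASR output spells numbers out as words.

```python
import re
from typing import List, Tuple

# Hypothetical table of spoken-number pairs that sound alike on poor audio.
CONFUSABLE = {
    "thirteen": "thirty",
    "fourteen": "forty",
    "fifteen": "fifty",
    "sixteen": "sixty",
    "seventeen": "seventy",
    "eighteen": "eighty",
    "nineteen": "ninety",
    "million": "billion",
}
# Make the mapping symmetric so "fifty" also points back to "fifteen".
CONFUSABLE.update({v: k for k, v in list(CONFUSABLE.items())})


def flag_for_review(text: str) -> List[Tuple[str, str]]:
    """Return (word_heard, plausible_alternative) pairs a reviewer should verify."""
    flags = []
    for match in re.finditer(r"[a-z]+", text.lower()):
        word = match.group()
        if word in CONFUSABLE:
            flags.append((word, CONFUSABLE[word]))
    return flags


flags = flag_for_review("We expect fifteen basis points on two billion in revenue")
# flags == [("fifteen", "fifty"), ("billion", "million")]
```

A check like this cannot decide which number was actually spoken. It only routes the risky tokens to someone who can listen to the audio with financial context.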

Financial terminology compounds the problem. Terms like non-GAAP adjusted EBITDA, comparable store sales, and free cash flow conversion are standard vocabulary for anyone on an earnings call. But they’re not standard vocabulary for general-purpose speech recognition. Speechmatics has noted that financial jargon systematically confuses standard speech-to-text engines, with acronyms like VAT, SEC, and GAAP creating recurring errors. Industry-specific abbreviations (PPTA, IVT, CLIN) and pharmaceutical drug names on healthcare-focused calls push accuracy even lower. Without domain-trained language models, these terms get mangled into phonetic approximations that are useless to the analyst reading the transcript.

Speaker Attribution and Diarization in Multi-Party Financial Calls

A typical earnings call includes a CEO, a CFO, possibly a COO or division president, and a rotating cast of sell-side analysts asking questions. Who said what isn’t a formatting detail. It’s analytically essential. A bullish comment on margins attributed to the CFO carries different weight than the same comment attributed to an IR coordinator. Misattributing a single statement changes the analytical value of the entire transcript.

Generic speaker diarization (the process of segmenting audio by speaker) wasn’t built for this. It was built for two-person interviews and meeting recordings with clear turn-taking. Financial calls break those assumptions. Multiple speakers join from different phone lines with varying audio quality. Analyst Q&A segments involve rapid handoffs between participants who may speak only once. And operator interjections create additional segmentation challenges.

LSEG’s research on automatic transcript generation describes how the organization built a dedicated speaker diarization system specifically because generic solutions couldn’t reliably distinguish speakers in financial events. That’s a major financial data provider concluding that off-the-shelf diarization isn’t fit for purpose on their content.

Inflxd’s benchmarking reinforced this finding. In a 10-file sample of vendor transcripts, the analysis identified over 15 instances of “Unknown Analyst” labels where the speaker’s identity was available but unresolved, plus more than 5 cases of wrongly identified speaker names. For expert networks and data platforms whose clients depend on accurate speaker attribution, these aren’t minor formatting issues. They’re product defects.

Accents, Audio Quality, and Domain-Specific Vocabulary Challenges

Financial calls are global. A single earnings event might include a CEO based in Munich, a CFO calling from Singapore, and analysts dialing in from London, New York, and São Paulo. Each speaker brings a different accent, a different speaking cadence, and potentially a different phone line with different audio characteristics.

LSEG’s research notes a direct correlation between ASR performance and metadata factors including non-native English accents and audio equipment quality. This matters because generic ASR models are predominantly trained on clean, native-English audio. When the input deviates from that baseline (as it routinely does on international financial calls), accuracy degrades in ways that compound with the numerical and terminological challenges already described. A non-native speaker discussing basis point movements over a compressed VoIP connection is essentially a worst-case scenario for a general-purpose transcription model.

The transcription vendors serving expert networks and financial data platforms often don’t account for this reality in their models or their pricing. The result is that the hardest audio to transcribe (global, multi-accent, domain-dense financial content) gets processed through the same pipeline as the easiest. And the accuracy gap shows up in exactly the places where it matters most.

How Transcription Errors Compound Across Financial Workflows

A single transcription error is easy to dismiss. One misspelled company name, one swapped digit, one misattributed speaker turn. Taken in isolation, each looks like a minor quality issue. But financial transcripts don’t exist in isolation. They feed search indexes, AI summarization pipelines, compliance archives, and client-facing research products. Every downstream system that consumes the transcript inherits its errors. And that’s where the real cost lives: not in correcting one word, but in every decision, product, and compliance record built on flawed text.

This compounding dynamic is the hidden cost of commodity transcription. It’s also the reason that transcript accuracy for financial workflows can’t be evaluated at the word level alone. A transcript that scores 95% word-level accuracy (a 5% word error rate) can still be functionally broken if the 5% it gets wrong includes speaker names, financial figures, or entity references that anchor the entire document’s utility.
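To make the gap between word-level and domain-critical accuracy concrete, here is a minimal sketch that scores the same transcript two ways. The word error rate is the standard word-level edit distance divided by reference length. The `numeric_accuracy` helper is a hypothetical, deliberately crude measure that compares numeric tokens by position, and the sample sentence is invented for illustration.

```python
import re
from typing import List


def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance (substitutions, insertions, deletions) / reference length."""
    ref, hyp = reference.split(), hypothesis.split()
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1] / len(ref)


def numeric_tokens(text: str) -> List[str]:
    """Extract figures such as '29%', '0.3', or '40'."""
    return re.findall(r"\d+(?:\.\d+)?%?", text)


def numeric_accuracy(reference: str, hypothesis: str) -> float:
    """Share of reference figures reproduced exactly, compared by position (a simplification)."""
    ref_nums, hyp_nums = numeric_tokens(reference), numeric_tokens(hypothesis)
    if not ref_nums:
        return 1.0
    matches = sum(1 for r, h in zip(ref_nums, hyp_nums) if r == h)
    return matches / len(ref_nums)


reference = ("we ended the quarter with 29% market share in our core segment "
             "and expect that to hold through next year")
hypothesis = reference.replace("29%", "9%")

wer = word_error_rate(reference, hypothesis)        # 1 wrong word out of 20
num_acc = numeric_accuracy(reference, hypothesis)   # the only figure is wrong
```

In this invented example the transcript is 95% accurate by word count and 0% accurate on the one figure an analyst would actually use.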

The Impact on Search, Summaries, and Transcript Libraries

Expert networks and financial data platforms invest heavily in searchable transcript libraries because those libraries compound in value over time. An analyst searching for every instance where a specific company’s management discussed pricing power needs to find every relevant transcript. That only works if the company name is spelled correctly, tagged consistently, and associated with the right speakers across every document.

When a transcription vendor’s ASR model renders a company name phonetically (turning “Schlumberger” into “Slumber Jay,” for instance, or dropping a ticker symbol entirely), that transcript becomes invisible to the search index. It’s not that the content is wrong in a way someone would notice while reading. It’s that the content never surfaces when it should. The error is silent. The analyst doesn’t know what they didn’t find.
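The silent miss is easy to reproduce with a toy keyword index. The sketch below is a simplified stand-in for a real search platform: the `build_index` function and both sample documents are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Set


def build_index(docs: Dict[str, str]) -> Dict[str, Set[str]]:
    """Map each lowercased token to the set of document IDs that contain it."""
    index: Dict[str, Set[str]] = defaultdict(set)
    for doc_id, text in docs.items():
        for token in text.lower().split():
            index[token.strip(".,")].add(doc_id)
    return index


# Hypothetical transcripts: the second one has the company name rendered phonetically.
docs = {
    "call_001": "Schlumberger management discussed pricing power in North America.",
    "call_002": "Slumber Jay management discussed pricing power in the Middle East.",
}

index = build_index(docs)
hits = index.get("schlumberger", set())
# hits contains only "call_001"; "call_002" never surfaces and no error is raised.
```

Nothing fails here. The query returns a plausible result set, which is exactly why the missing transcript goes unnoticed.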

The same logic applies to topic segmentation and keyword metadata. If a transcript’s section headers are inaccurate, or if key financial terms are garbled into phonetic approximations, the entire document loses its navigability. For platforms building structured data products on top of transcript libraries, these aren’t cosmetic issues. They’re data integrity failures that degrade the product’s core value proposition.

How Poor Transcript Quality Degrades AI and NLP Products

The compounding problem intensifies when transcripts feed machine learning systems. Sentiment analysis, thematic modeling, and AI-generated summaries all depend on accurate input text. Flawed transcripts don’t just produce slightly less accurate AI outputs. They can produce directionally wrong ones.

Speaker misattribution is a clear example. If an AI summarization tool processes a transcript where the CFO’s cautious guidance language is attributed to an analyst, the summary will misrepresent who said what and strip the statement of its authority. The downstream consumer of that summary (an analyst, a portfolio manager, a client) receives a distorted picture of the call without any way to detect the distortion.

Sentiment analysis is equally vulnerable. Consider a British idiom like “it doesn’t half motivate you,” which means “it really motivates you.” A transcription error that renders this as “it doesn’t motivate” flips the sentiment signal entirely. A model trained to detect positive or negative management tone will register the opposite of what was actually communicated. These aren’t theoretical risks. They’re the predictable result of feeding domain-insensitive transcripts into NLP systems that trust their input.

The principle is straightforward: garbage in, garbage out. But in financial AI applications, the stakes are higher than in most domains because the outputs inform capital allocation decisions.

Transcript Accuracy and Compliance Risk

For expert networks managing MNPI (material nonpublic information) risk, transcript accuracy isn’t a quality preference. It’s a compliance requirement. Compliance teams rely on transcripts as auditable records of what was said, by whom, and when. If those records are inaccurate, the entire defensibility of a firm’s compliance program weakens.

A transcript with misidentified speakers makes it impossible to verify whether a specific individual disclosed information they shouldn’t have. A transcript that drops or alters a material qualifier (“we do not expect” becoming “we expect,” or vice versa) creates a false record that could surface during a regulatory review.

For IR teams, the risk is different but equally concrete. Companies that post earnings call transcripts as part of their Regulation FD best practices are creating public records of what management communicated to the market. An inaccurate transcript posted in that context can create confusion about guidance figures, forward-looking statements, or strategic commitments. The SEC recommends (but doesn’t mandate) transcript posting as a method of broad, non-exclusionary disclosure. That recommendation makes accuracy a reputational and legal consideration, not just an operational one.

The through-line across all three of these domains is the same. Transcription errors don’t stay contained. They propagate. And the cost of that propagation is always larger than the cost of getting the transcript right in the first place.

Why AI-Only Transcription Falls Short for Financial Audio

There’s a reasonable assumption circulating among procurement teams right now: modern ASR has solved transcription. Whisper is open source. Cloud APIs are cheap. Turnaround is near-instant. Why pay for human review?

The assumption isn’t unreasonable for general audio. It is, however, wrong for financial content. And the evidence doesn’t come from transcription critics or legacy vendors defending manual workflows. It comes from the most sophisticated financial data organizations in the world, the ones that have invested the most in AI transcription and still pair it with human review for production use.

Where Automated Speech Recognition Breaks Down on Financial Content

The core problem with AI-only transcription on financial audio isn’t that the models are bad. It’s that they’re confidently wrong in ways that are invisible without domain expertise.

LSEG’s research on automatic transcript generation documents this directly. Their analysis found that confidence scores from deep neural networks tend to be overestimated. More specifically, incorrectly hypothesized words still carried mean confidence scores between 0.71 and 0.88. That’s a system telling you it’s 71% to 88% confident in output that’s actually wrong. For a downstream QA process relying on confidence thresholds to flag errors, this is a fundamental reliability problem. The errors that matter most are precisely the ones the model doesn’t flag.
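A short sketch shows why overestimated confidence undermines threshold-based QA. The word list, confidence values, and correctness labels below are hypothetical. Only the 0.71 to 0.88 range for wrong words comes from the LSEG finding cited above, and the function names are illustrative.

```python
from typing import List, Tuple

# Hypothetical ASR output as (word, confidence, is_correct). Values are illustrative only.
Word = Tuple[str, float, bool]

asr_words: List[Word] = [
    ("revenue", 0.97, True),
    ("grew", 0.95, True),
    ("approximately", 0.86, True),
    ("fifty", 0.84, False),    # the speaker said "fifteen"
    ("basis", 0.96, True),
    ("points", 0.98, True),
    ("at", 0.99, True),
    ("slumber", 0.73, False),  # a phonetically rendered company name
]


def review_queue(words: List[Word], threshold: float) -> List[str]:
    """Words a confidence-threshold QA step would send to a human reviewer."""
    return [w for w, conf, _ in words if conf < threshold]


def missed_errors(words: List[Word], threshold: float) -> List[str]:
    """Wrong words that pass the threshold and are never reviewed."""
    return [w for w, conf, ok in words if not ok and conf >= threshold]


missed_at_070 = missed_errors(asr_words, 0.70)  # both errors slip through
queue_at_090 = review_queue(asr_words, 0.90)    # catches both, plus one correct word
missed_at_090 = missed_errors(asr_words, 0.90)
```

Raising the threshold catches more errors, but it also sends more correct words to review, so confidence scores alone cannot replace a reviewer who understands the content.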

The failure modes are systematic, not random. Domain vocabulary that doesn’t appear in general training data gets rendered as phonetic approximations. Numerical precision degrades on acoustically similar terms (“fifteen” vs. “fifty,” “billion” vs. “million”) with no financial reasoning layer to catch implausible results. Accented speech from global financial calls pushes accuracy lower. And compressed phone audio (the standard for expert network calls) compounds every one of these weaknesses simultaneously.

These aren’t limitations that scale or compute will fix in the near term. They’re architectural gaps. A general-purpose ASR model doesn’t know that a 20-percentage-point swing in market share within a single sentence is implausible. It doesn’t know that “EBITDA” isn’t three separate words. It processes phonemes without financial context, and the result is output that looks clean but contains errors in the exact places where accuracy matters most.

Why Human-in-the-Loop Review Remains Essential for Transcription Quality

Here’s what’s instructive: the organizations with the best financial ASR models in the world haven’t eliminated human review. They’ve built their production workflows around it.

Kensho (owned by S&P Global) trained its Scribe transcription system on over 100,000 hours of domain-specific financial audio. That’s arguably the deepest financial speech training dataset in existence. And Kensho still offers a human-in-the-loop option for production transcription, described as a professional transcription service tailored to business and finance, staffed by in-house transcriptionists and editors with extensive domain training. S&P Global, which holds its transcripts to a 99% accuracy SLA, concluded that even the best financial ASR model needs human verification to meet that bar.

LSEG reached the same conclusion through a different path. Their research describes a multi-pass workflow where ASR output flows to domain experts for manual correction before publication. The organization produces approximately 40,000 financial event transcripts per year. At that scale, they could have optimized for pure automation. Instead, they built a pipeline that treats AI as the first pass and human review as the quality gate.

This convergence isn’t coincidental. It reflects a structural reality about financial transcription: AI is excellent at generating a first draft in minutes, handling the bulk of conversational content accurately, and reducing the labor required for production. But verifying numbers, resolving ambiguous audio, correcting speaker attribution, and ensuring domain terminology is rendered accurately all require contextual reasoning that current ASR models can’t reliably perform.

The right framing isn’t “AI vs. human.” It’s AI for speed, human review for accuracy. That hybrid architecture is what every serious player in financial transcription has converged on. Not as a temporary compromise while the models improve, but as the production standard for content where errors carry real consequences.

To be clear: generic AI-only transcription is perfectly adequate for internal notes, rough first drafts, and non-critical use cases. For production-grade financial transcripts that feed client deliverables, compliance records, searchable data products, and AI pipelines, human review isn’t a luxury. It’s the quality gate that separates usable financial data from a liability.

The transcription vendors that serve expert networks and financial data platforms with standard AI-only pipelines aren’t delivering a modern solution. They’re delivering an incomplete one. And the gap between their output and what these firms’ clients actually need is where accuracy, trust, and product quality break down.

What to Look for in a Financial Transcription Vendor

Most vendor evaluations for financial transcription are broken before they start. The RFP goes out with the same criteria you’d use for any commodity service: per-minute rate, turnaround time, file format options. Maybe a checkbox for “AI-powered.” That framework works for general transcription. It doesn’t work when the output feeds compliance archives, client-facing research products, and AI pipelines where a single misrendered number can corrupt downstream analysis.

Evaluating a financial transcription vendor is a data infrastructure decision, not a procurement exercise. Here’s what actually matters.

Evaluating Transcription Accuracy Claims and Domain Expertise

Every vendor claims high accuracy. The number itself is almost meaningless without context. A vendor reporting “99% accuracy” on clean, single-speaker, native-English podcast audio is making a fundamentally different claim than one reporting 99% on multi-accent earnings calls dense with financial terminology. The first number is easy to hit. The second is exceptionally hard.

When evaluating accuracy, ask these questions:

What content was the accuracy figure measured on? If the benchmark dataset doesn’t resemble your actual audio (expert calls, earnings events, multi-speaker financial discussions), the number isn’t relevant to your use case.

Does accuracy include speaker identification? Word-level correctness is necessary but not sufficient. If the transcript gets every word right but attributes the CFO’s guidance to an analyst, it’s functionally broken. Ask whether the vendor measures speaker attribution accuracy separately.

How is accuracy measured? Word error rate (WER) is the standard metric, but it treats all errors equally. A misspelled filler word and a wrong revenue figure count the same. Ask whether the vendor tracks domain-critical accuracy: numbers, entity names, and financial terminology specifically.

Does the vendor maintain domain-specific glossaries and entity libraries? Financial audio requires continuously updated reference data for company names, ticker symbols, executive names, and industry terminology. Without these, even a good ASR model will phonetically approximate terms it hasn’t seen.

What’s the review process? This is where you separate commodity vendors from production-grade ones. A useful maturity framework: Level 1 vendors deliver AI-only output with no human review. Level 2 vendors pair ASR with trained financial editors in a human-in-the-loop workflow. Level 3 vendors integrate into your platform, maintain auditable QA processes, and continuously refine their models against your specific content. S&P Global’s 99% accuracy SLA and LSEG’s multi-pass workflow (ASR followed by domain expert review) reflect Level 3 thinking. That’s the bar.
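To make the WER point concrete, here is a minimal Python sketch of how a buyer might score a vendor sample two ways: plain word error rate, and an error rate restricted to domain-critical tokens. It is an illustration under stated assumptions, not any vendor’s methodology. The `GLOSSARY` set, the “contains a digit” rule for spotting numbers, and the function names are all hypothetical.

```python
# Hypothetical glossary of domain-critical terms; a real one would be far larger.
GLOSSARY = {"ebitda"}
PUNCT = ".,;:!?\"'()"


def tokenize(text: str) -> list[str]:
    # Lowercase, split on whitespace, strip surrounding punctuation.
    # Characters inside a token (the "." in "14.2%") are preserved.
    return [t.strip(PUNCT) for t in text.lower().split() if t.strip(PUNCT)]


def is_critical(token: str) -> bool:
    # Assumption: a token is domain-critical if it is a glossary term
    # or contains a digit (figures, percentages, dates).
    return token in GLOSSARY or any(c.isdigit() for c in token)


def score(reference: str, hypothesis: str) -> tuple[float, float]:
    """Return (word error rate, error rate on critical reference tokens)."""
    ref, hyp = tokenize(reference), tokenize(hypothesis)
    if not ref:
        raise ValueError("reference transcript is empty")
    n, m = len(ref), len(hyp)

    # Word-level Levenshtein distance table.
    dist = [[0] * (m + 1) for _ in range(n + 1)]
    for i in range(n + 1):
        dist[i][0] = i
    for j in range(m + 1):
        dist[0][j] = j
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            cost = 0 if ref[i - 1] == hyp[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j - 1] + cost,  # match or substitution
                             dist[i - 1][j] + 1,         # deletion
                             dist[i][j - 1] + 1)         # insertion

    # Backtrace to find which reference tokens were transcribed correctly.
    correct = set()
    i, j = n, m
    while i > 0 and j > 0:
        cost = 0 if ref[i - 1] == hyp[j - 1] else 1
        if dist[i][j] == dist[i - 1][j - 1] + cost:
            if cost == 0:
                correct.add(i - 1)
            i, j = i - 1, j - 1
        elif dist[i][j] == dist[i - 1][j] + 1:
            i -= 1
        else:
            j -= 1

    wer = dist[n][m] / n
    critical = [k for k, tok in enumerate(ref) if is_critical(tok)]
    if not critical:
        return wer, 0.0
    missed = sum(1 for k in critical if k not in correct)
    return wer, missed / len(critical)


reference = "We expect EBITDA margin of 14.2% for the full year."
print(score(reference, "We expect EBITDA margin of 40.2% for the full year."))
print(score(reference, "We expect the EBITDA margin of 14.2% for the full year."))
```

Both sample outputs contain exactly one error against a ten-word reference, so both score a WER of 0.1. The first gets the margin figure wrong and misses half of the critical tokens; the second only inserts a stray “the” and misses none. That difference is what a domain-critical metric is meant to surface.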

Security Posture, Auditability, and Compliance Readiness

For organizations handling MNPI-sensitive content, security isn’t a checkbox on a vendor assessment form. It’s a structural requirement that should shape how you evaluate every aspect of the vendor relationship.

Start with the basics: encryption at rest and in transit, role-based access controls, and clearly defined data retention and deletion policies. Then go deeper. Does the vendor operate a closed-loop platform that prevents unauthorized audio downloads? Or does your sensitive financial audio sit on a shared drive accessible to editors working across dozens of industries?

Certifications matter, but they’re not sufficient on their own. SOC 2 Type II compliance and GDPR alignment demonstrate that a vendor has invested in security infrastructure. But ask how those standards apply to the human review layer specifically. Do editors sign NDAs? Do they undergo security training? Are they in-house staff or freelancers sourced from global marketplaces with minimal vetting?

Auditability is equally important. Can the vendor provide a complete audit trail showing who accessed a file, when edits were made, and what QA steps were completed? For compliance teams that need to demonstrate the integrity of their transcript records during a regulatory review, this isn’t optional.
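As a rough illustration of what “complete audit trail” can mean in practice, the Python sketch below models audit events as simple records and checks whether a file has passed every required QA step. The event fields, the action names, and the `REQUIRED_QA_STEPS` list are hypothetical, chosen only to mirror the questions above (who accessed a file, when edits were made, which QA steps were completed). A real vendor’s schema will differ.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical QA stages; substitute whatever steps the vendor actually documents.
REQUIRED_QA_STEPS = ("asr_first_pass", "editor_review", "final_qa")


@dataclass(frozen=True)
class AuditEvent:
    file_id: str
    actor: str      # who performed the action
    action: str     # "access", "edit", or "qa_step"
    detail: str     # e.g. the QA step name or a short edit description
    at: datetime    # when it happened (UTC)


def missing_qa_steps(events: list[AuditEvent], file_id: str) -> list[str]:
    # Collect the QA steps recorded for this file, then report any gaps.
    done = {e.detail for e in events
            if e.file_id == file_id and e.action == "qa_step"}
    return [step for step in REQUIRED_QA_STEPS if step not in done]


def actors_for(events: list[AuditEvent], file_id: str) -> set[str]:
    # Everyone who touched the file in any way.
    return {e.actor for e in events if e.file_id == file_id}


now = datetime.now(timezone.utc)
trail = [
    AuditEvent("call-001", "asr-service", "qa_step", "asr_first_pass", now),
    AuditEvent("call-001", "editor-17", "access", "opened transcript", now),
    AuditEvent("call-001", "editor-17", "edit", "corrected revenue figure", now),
    AuditEvent("call-001", "editor-17", "qa_step", "editor_review", now),
]
print(missing_qa_steps(trail, "call-001"))
print(sorted(actors_for(trail, "call-001")))
```

If a vendor can’t export something at least this granular for a given file, it is hard to see how a compliance team would demonstrate transcript integrity during a review.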

Turnaround, Integration, and Pricing Transparency for Financial Transcription Services

Expert networks processing thousands of calls per month can’t afford a manual upload-and-download workflow. Ask whether the vendor offers API integration with your existing platforms. Can transcripts flow directly into your content management system, search index, or client delivery pipeline without manual intervention? At scale, integration isn’t a nice-to-have. It’s the difference between a vendor that fits your operations and one that creates a bottleneck inside them.

Turnaround commitments should state what they cost in accuracy. A vendor promising two-hour delivery with human review on a 90-minute earnings call is either cutting corners on QA or staffing at a level that deserves scrutiny. Ask what happens to accuracy at the fastest turnaround tier.

Finally, pricing. Beware of credit-based systems with expiring credits, opaque enterprise contracts that bundle services you don’t need, and per-minute rates that don’t reflect the actual cost of production-grade output. A low per-minute rate from a vendor delivering AI-only transcripts with no domain review isn’t a bargain. It’s a different product entirely. Ask for clear, per-minute pricing with defined SLAs that specify accuracy targets, turnaround windows, and what happens when the vendor misses them.

The vendors that can answer all of these questions clearly are the ones treating financial transcription as data infrastructure. The ones that can’t are selling commodity text generation with a financial label on it.

Financial Transcription as Data Infrastructure: The Strategic Advantage

The argument across this entire piece reduces to a single claim: financial transcription isn’t a line item. It’s infrastructure. And the organizations that treat it accordingly (investing in accuracy, speaker attribution, structured metadata, and measurable quality benchmarks) will compound that advantage every quarter as their transcript libraries grow, their AI systems improve, and their compliance posture strengthens.

The shifts are clear. From commodity procurement to infrastructure investment. From AI-only pipelines to hybrid workflows with human review as the quality gate. From word-level accuracy metrics to end-to-end data quality that encompasses speaker identification, numerical precision, formatting, and downstream integration. From opaque vendor claims to domain-specific benchmarks measured on content that actually resembles your audio. These aren’t incremental improvements. They’re structural decisions that determine whether your transcripts function as reliable financial data or as a growing source of silent risk.

From Commodity Vendor to Strategic Transcription Partner

The commodity transcription vendor ecosystem was built for breadth, not depth. It serves dozens of industries with the same models, the same QA processes, and the same workforce. That’s a rational business model for general audio. It’s not equipped to serve expert networks processing thousands of domain-dense calls per month, or financial data platforms whose transcripts feed search indexes, NLP pipelines, and client-facing products where a single entity error degrades the entire dataset.

The gap between what these organizations need and what commodity vendors deliver isn’t closing. It’s widening. As transcripts increasingly serve as inputs to AI summarization, sentiment analysis, and compliance monitoring systems, the cost of upstream errors compounds faster. Every downstream system that trusts the transcript inherits its flaws.

How INFLXD Approaches Financial Transcription Quality

INFLXD exists because the vendor ecosystem wasn’t meeting the quality bar that expert networks and financial data platforms require. It’s built specifically for this content and these clients.

The model is hybrid by design: AI generates the first pass, then trained financial editors apply domain-specific glossaries, verify numerical accuracy, resolve speaker attribution, and enforce structured formatting through a multi-stage QA process. INFLXD doesn’t claim perfection. It commits to measurable, domain-specific quality benchmarks and continuous improvement against the actual audio its clients produce. Enterprise-grade security (encryption, access controls, audit trails) is foundational, not an add-on.

The organizations that invest in transcript quality infrastructure now won’t just have better transcripts. They’ll have better data, better AI outputs, better compliance records, and a structural advantage that compounds with every call they process.

Why the Right Financial Transcription Partner Changes Everything

The organizations that win in financial data don’t treat transcription as an afterthought. They treat it as the first layer of their data infrastructure. Every section of this piece points to the same underlying reality: the gap between generic transcription output and production-grade financial transcripts isn’t a minor quality variance. It’s a structural risk that compounds across every downstream system it touches.

Financial transcription services exist because financial audio is a fundamentally different problem. The vocabulary is specialized. The entities are dense and ambiguous. The numbers carry material weight. Speaker attribution matters for compliance and context alike. And every error that slips through doesn’t just sit in a document. It propagates into search indexes, NLP pipelines, AI-generated summaries, and client-facing products.

The firms that understand this (expert networks processing thousands of calls, financial data platforms building searchable transcript libraries, IR teams distributing earnings content to the market) aren’t looking for the cheapest per-minute rate. They’re looking for a partner with domain-specific accuracy models, human-in-the-loop review, measurable quality benchmarks, and the security posture to handle sensitive financial content.

That’s exactly what INFLXD builds for. We don’t sell generic transcription. We deliver transcript quality infrastructure purpose-built for financial audio, with domain-trained models, rigorous HITL workflows, and accuracy benchmarks calibrated to the terminology, entity density, and speaker complexity that define this industry.

See Where Your Transcripts Actually Stand

If you’re an expert network, financial data platform, or any firm whose products depend on transcript accuracy, here’s a concrete next step. Run your transcripts through INFLXD’s accuracy benchmarking process. We’ll measure your current vendor output against domain-specific quality standards and show you exactly where errors are entering your workflow. No guesswork. No generic audits. Just a clear, data-driven picture of what’s reaching your clients.
